Bridging Isolation: Building MCP Servers for Context-Aware LLMs

Table of Contents

- Understanding LLM Isolation

- What is MCP?

- What is an MCP Server?

- What is an MCP Client?

- Making a Shopping Assistant Aware of Your Data

- Implementation

- Building the MCP Server

- Securing the MCP Server

- Verifying the MCP Server

- Building the MCP Client

- Final Thoughts

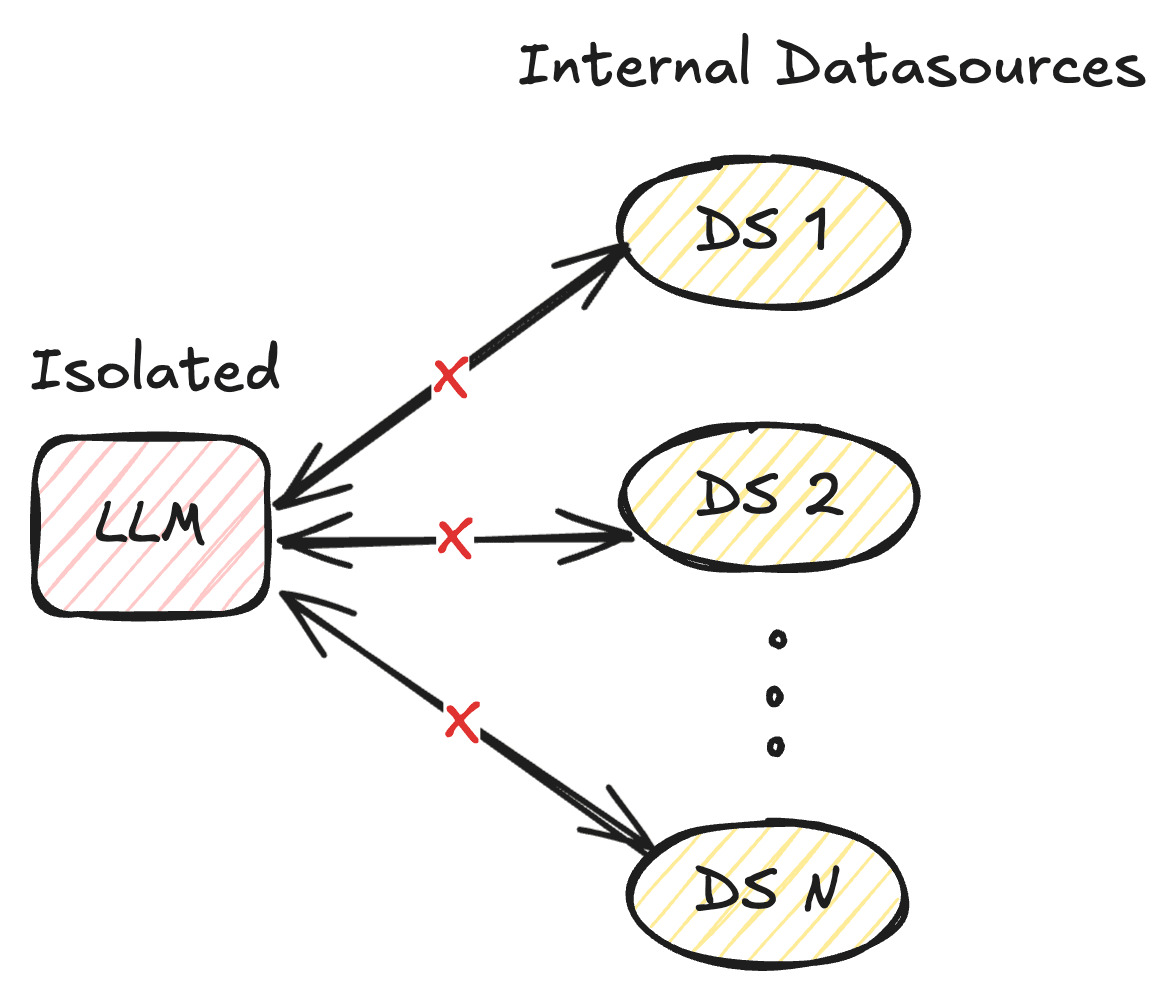

Understanding LLM Isolation

As AI assistants become widely used, companies have poured resources into improving model performance, leading to rapid progress in reasoning and output quality. However, even the most advanced models remain limited because they are cut off from real data, which sits locked behind legacy systems and fragmented information sources. Each additional data source requires its own custom integration, which makes it hard to build and scale interconnected systems that are aware of real-time data. [1]

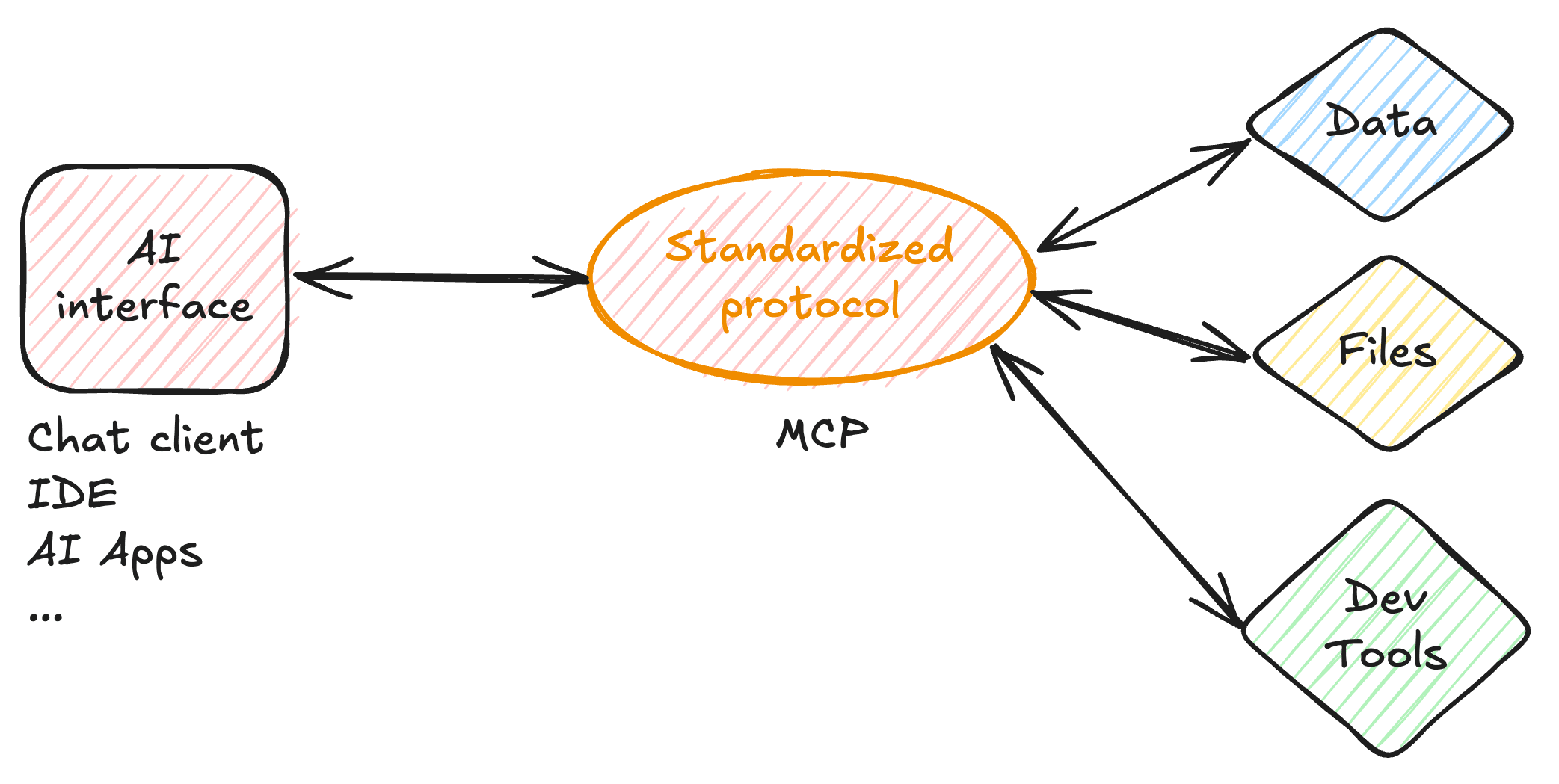

What is MCP?

The Model Context Protocol (MCP) is an open-source standard that lets AI applications connect with external systems such as data sources, tools, and workflows, functioning as a universal connector similar to a “USB-C port” for AI. By offering a consistent way to reach the environments where information is stored, such as content platforms, business software, and developer tools, it aims to help advanced models deliver responses that are more accurate and better aligned with the relevant context, and it gives them tools for performing operations on those systems. [1][2]

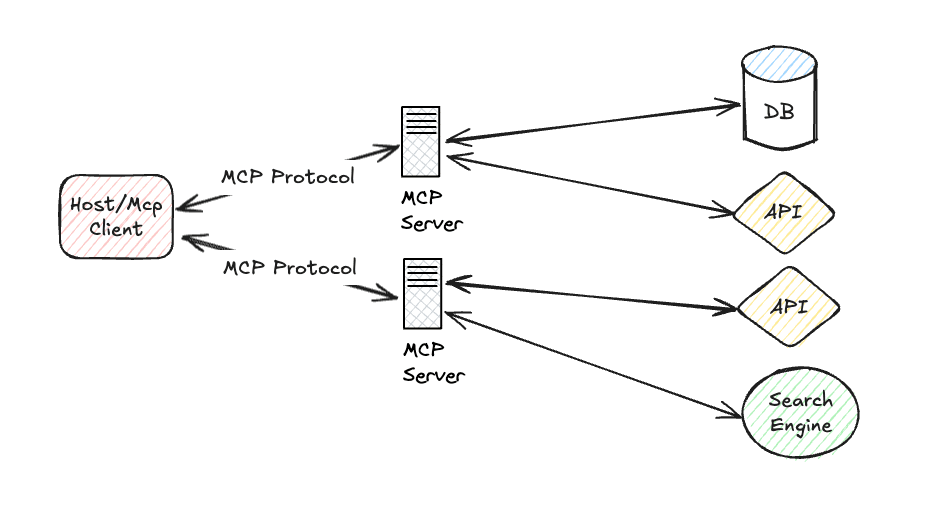

What is an MCP server?

MCP servers are programs that give AI agents a consistent way to access tools, services, and data by translating AI requests into specific commands that those systems understand. Whether it’s pulling files, querying databases, listing GitHub pull requests, saving documents, or transcribing videos, an MCP server acts like an adapter that reveals its capabilities, executes tasks and formats results. These servers let AI assistants tap into new data sources or services in order to perform actions on a user’s behalf. [1][2]

What is an MCP client?

MCP clients are the components within an AI application (even a custom one) that communicate directly with individual MCP servers. While the host app manages the overall interaction and may coordinate multiple connections, each client handles one secure, protocol-level link to a specific server. The client initiates requests for data or actions, and the server decides what information can be returned, applies privacy and access controls, and responds with filtered results. This model allows AI systems to safely access enterprise data, use tools, and ground their outputs in real-time information while maintaining security and governance. [3]
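To make the client/server exchange more concrete, the sketch below builds the two JSON-RPC 2.0 messages a client typically sends when working with tools: `tools/list` to discover what a server offers and `tools/call` to invoke one tool. The class name `McpMessages` is our own, and we assume the shopping tool built later in this article exposes its single parameter as `query`. A real client would normally use an MCP SDK rather than hand-built strings.

```java
import java.util.Map;
import java.util.StringJoiner;

/** Illustrative helper (hypothetical name) that builds JSON-RPC 2.0 payloads for MCP tool requests. */
final class McpMessages {

    /** Request asking the server which tools it exposes. */
    static String listTools(int id) {
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id + ",\"method\":\"tools/list\"}";
    }

    /** Request invoking one tool by name with string-valued arguments. */
    static String callTool(int id, String toolName, Map<String, String> arguments) {
        StringJoiner args = new StringJoiner(",", "{", "}");
        arguments.forEach((key, value) -> args.add(quote(key) + ":" + quote(value)));
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id
                + ",\"method\":\"tools/call\""
                + ",\"params\":{\"name\":" + quote(toolName)
                + ",\"arguments\":" + args + "}}";
    }

    /** Minimal JSON string escaping: handles backslashes and double quotes only. */
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    public static void main(String[] args) {
        System.out.println(listTools(1));
        // The argument name "query" is an assumption about the tool's input schema.
        System.out.println(callTool(2, "search_for_products", Map.of("query", "running shoes")));
    }
}
```

The server answers each request with a JSON-RPC response carrying the same `id`, which is how the client matches results to the calls it made.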

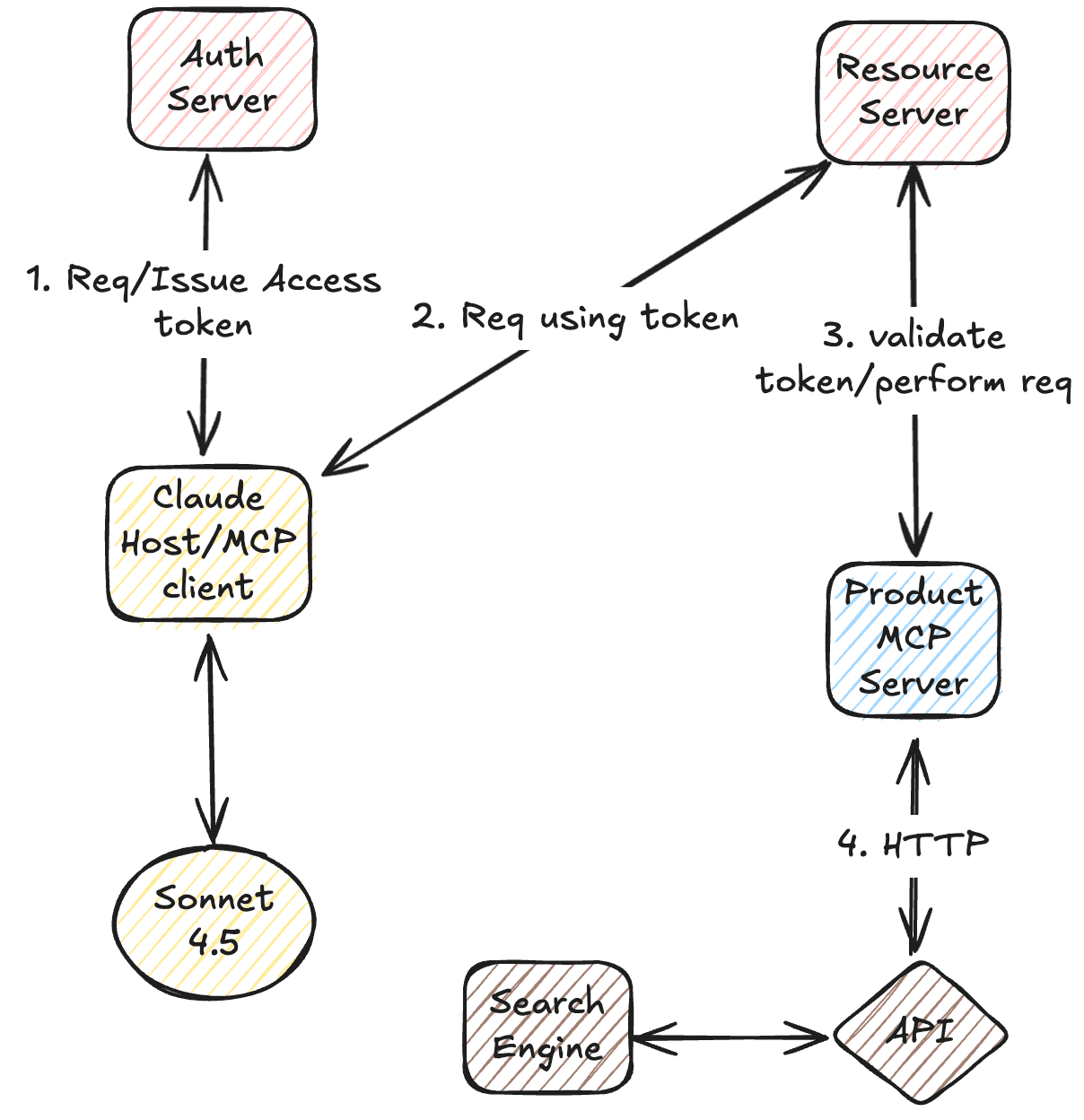

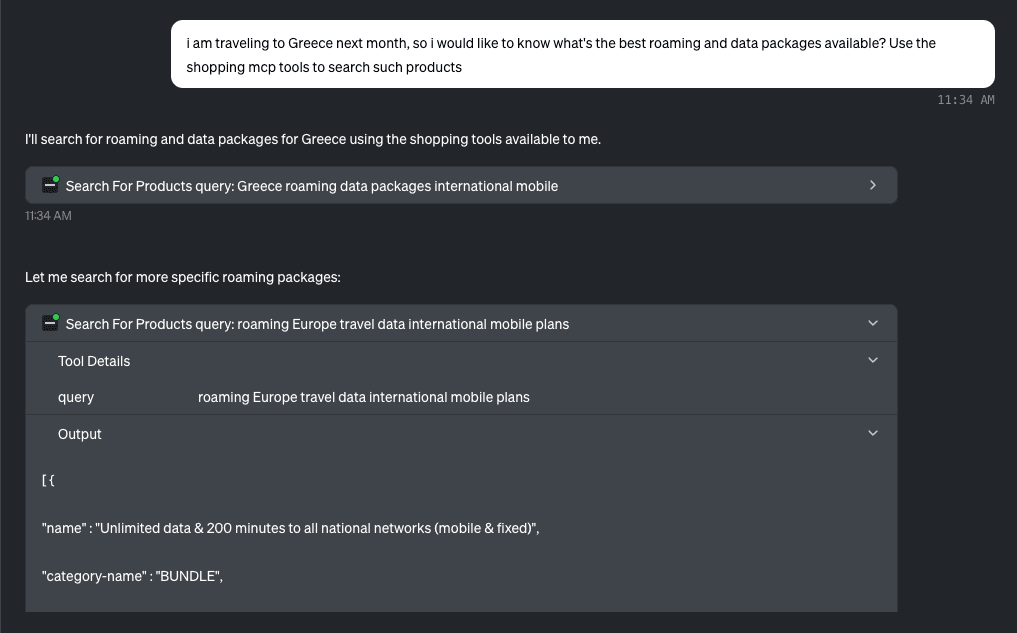

Making a shopping assistant aware of your data

In this example, we’ll build an e-shop assistant that can safely access internal data in real time, use it to generate context-aware responses, and even perform actions on behalf of the user. The MCP server will be implemented with Spring Boot and Spring AI, and secured using Spring Authorization Server together with Spring resource server capabilities. Claude, running Sonnet 4.x, will act as the Host/MCP client, but you could also build your own host or client in Spring Boot with any model you prefer. The diagram below illustrates the high-level architecture. [4]

Note: MCP security support in Spring AI is currently a work in progress (see the ongoing documentation).

Building the MCP server

To create an MCP server in Spring Boot, we only need the WebMVC MCP starter along with a few configuration properties. These settings define the MCP endpoint path, enable the streamable HTTP protocol, configure a keep-alive interval for periodic health checks, and assign a unique session cookie name. The cookie name matters because cookies are shared per domain, so when multiple apps run on localhost across different ports, using a distinct cookie prevents conflicts with the client application. [5]

<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-starter-mcp-server-webmvc</artifactId>
</dependency>

spring.ai.mcp.server.protocol=STREAMABLE
spring.ai.mcp.server.streamable-http.mcp-endpoint=/mcp
spring.ai.mcp.server.version=0.0.1
spring.ai.mcp.server.streamable-http.keep-alive-interval=10s
server.servlet.session.cookie.name=ESHOP_MCP_SERVER_SESSION

Next, we define a dummy tool that the MCP server will expose. In this example, the tool queries an external data source to retrieve products based on criteria inferred by the LLM. The response is simplified to deliver clean, relevant context back to the model. A noteworthy library here is TOON, which helps reduce unnecessary JSON tokens to lower inference costs.
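Before looking at the tool itself, here is a rough sketch of the token-saving idea mentioned above. For a list of objects that all share the same fields, a tabular layout states the field names once and then emits one compact row per item, instead of repeating every key as JSON does. This is a hand-rolled approximation of that layout, not the TOON library's API; the class name `TabularEncoder` and the sample products are made up for illustration, and quoting of values that contain commas or newlines is left out.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

/** Hypothetical helper: encodes uniform rows as one header line plus one line per row. */
final class TabularEncoder {

    static String encode(String name, List<Map<String, Object>> rows) {
        if (rows.isEmpty()) {
            return name + "[0]:";
        }
        // Field order is taken from the first row; all rows are assumed to share it.
        List<String> fields = List.copyOf(rows.get(0).keySet());
        StringBuilder out = new StringBuilder();
        out.append(name).append('[').append(rows.size()).append("]{")
           .append(String.join(",", fields)).append("}:");
        for (Map<String, Object> row : rows) {
            out.append("\n  ").append(fields.stream()
                    .map(field -> String.valueOf(row.get(field)))
                    .collect(Collectors.joining(",")));
        }
        return out.toString();
    }

    /** Builds one sample row; LinkedHashMap keeps the insertion order of the fields. */
    static Map<String, Object> product(int id, String name, double price) {
        Map<String, Object> row = new LinkedHashMap<>();
        row.put("id", id);
        row.put("name", name);
        row.put("price", price);
        return row;
    }

    public static void main(String[] args) {
        // Made-up catalog entries, used only to show the output shape.
        System.out.println(encode("products", List.of(
                product(1, "Trail Shoe", 79.9),
                product(2, "Road Shoe", 24.5))));
    }
}
```

The savings grow with the number of rows, since the keys appear once in the header rather than once per product.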

@Tool(name = "search_for_products",
        description = "Search for products in the eshop " +
                "catalog based on a search query and provide suggestions")
public String searchProducts(String query) {
    log.info("Searching for products with query: {}", query);
    try {
        // Pass the raw query as a URI variable: RestClient encodes template
        // variables itself, so encoding it beforehand would double-encode it.
        String result = restClient.get()
                .uri("/catalog-search?page=0&size=10&query={query}", query)
                .retrieve()
                .body(String.class);
        // Trim the catalog response down to what the model needs.
        return simplifyJson(result);
    } catch (Exception e) {
        log.error("Failed to fetch or parse catalog results", e);
        return "{\"error\":\"Failed to retrieve products\"}";
    }
}

Finally, we register the tool so it becomes available to the MCP server.

@Bean
public List<ToolCallback> tools(CatalogService catalogService) {
    return List.of(ToolCallbacks.from(catalogService));
}

Securing the MCP Server

To secure the MCP server, we add an OAuth2 Authorization Server and a Resource Server. This setup protects access to the MCP endpoint by requiring valid JWTs. For simplicity, all of the components run within the same Spring Boot application. [6][7]

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-oauth2-resource-server</artifactId>
</dependency>

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-oauth2-authorization-server</artifactId>
</dependency>

Here we register a client with plain dummy credentials that it can use to request a token, and set a time to live for the issued access token.

spring.security.oauth2.authorizationserver.client.oidc-client.registration.client-id=mcp-client
spring.security.oauth2.authorizationserver.client.oidc-client.registration.client-secret={noop}secret
spring.security.oauth2.authorizationserver.client.oidc-client.registration.client-authentication-methods=client_secret_basic
spring.security.oauth2.authorizationserver.client.oidc-client.registration.authorization-grant-types=client_credentials
spring.security.oauth2.authorizationserver.client.oidc-client.token.access-token-time-to-live=36000s

With the Authorization Server and Resource Server dependencies in place, we configure Spring Security. The example below requires authentication for all requests, enables JWT validation for the resource server, and disables CSRF for simplicity.

@Bean
@SneakyThrows
SecurityFilterChain securityFilterChain(HttpSecurity http) {
    return http.authorizeHttpRequests(auth -> auth.anyRequest().authenticated())
            .with(authorizationServer(), Customizer.withDefaults())
            .oauth2ResourceServer(resource -> resource.jwt(Customizer.withDefaults()))
            .csrf(CsrfConfigurer::disable)
            .cors(Customizer.withDefaults())
            .build();
}

And that’s all that’s needed. The MCP server is now protected behind OAuth2 so only authorized clients can connect.
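When a request is rejected, it can help to look at what is inside the JWT being sent. A compact JWT is three Base64URL segments separated by dots (header, payload, signature), so the claims can be read with the JDK alone. The sketch below only decodes the payload for inspection; it does not verify the signature, which remains the resource server's job. The class name `JwtPeek` and the sample claim are our own illustrations, not output from the server in this article.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

/** Hypothetical debugging helper: reads JWT claims without validating the token. */
final class JwtPeek {

    /** Returns the decoded JSON payload of a compact JWT (header.payload.signature). */
    static String payload(String jwt) {
        String[] parts = jwt.split("\\.");
        if (parts.length != 3) {
            throw new IllegalArgumentException("Expected header.payload.signature");
        }
        byte[] decoded = Base64.getUrlDecoder().decode(parts[1]);
        return new String(decoded, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // Build a fake, unsigned token purely to demonstrate decoding.
        Base64.Encoder encoder = Base64.getUrlEncoder().withoutPadding();
        String header = encoder.encodeToString("{\"alg\":\"none\"}".getBytes(StandardCharsets.UTF_8));
        String claims = encoder.encodeToString("{\"sub\":\"mcp-client\"}".getBytes(StandardCharsets.UTF_8));
        System.out.println(payload(header + "." + claims + ".signature"));
    }
}
```

Never treat decoded claims as trusted input: anyone can craft a token with arbitrary claims, and only signature validation tells the two apart.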

Verifying the MCP server

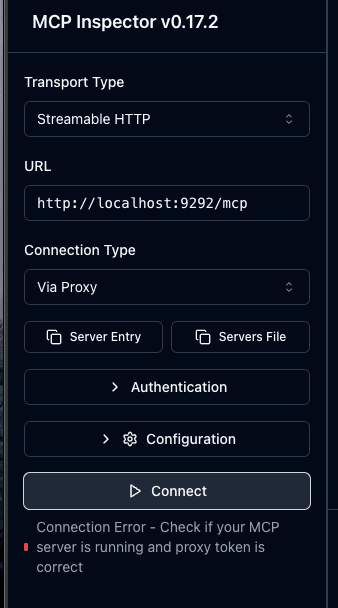

To test that our MCP server is running and properly secured, we can use the MCP Inspector.

npx @modelcontextprotocol/inspector

First, we attempt to connect without providing an access token. As expected, the connection fails because the MCP endpoint is protected.

Next, we request a token from our Authorization Server using the client_credentials flow:

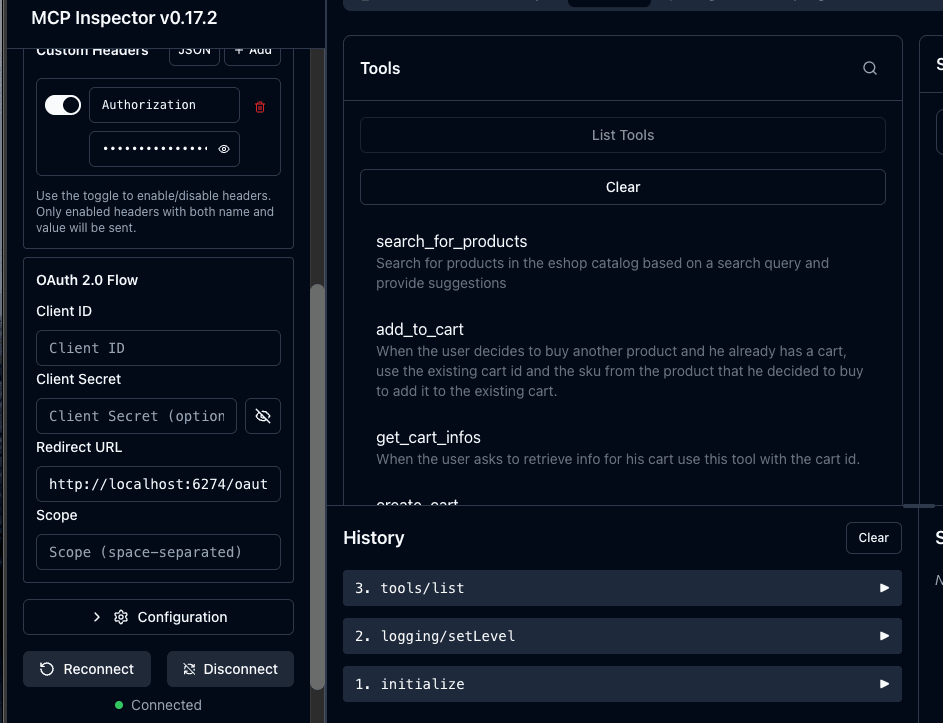

curl -XPOST http://localhost:9292/oauth2/token --data grant_type=client_credentials --user mcp-client:secret

Then we try connecting again through the inspector with the token provided.

This time the connection succeeds, confirming that the MCP server is secured and we can now view the tools we have defined.
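The same token request can be issued from Java instead of curl. The sketch below builds it with the JDK's `java.net.http` API: the client id and secret go into an HTTP Basic `Authorization` header (matching `client_secret_basic`), and the grant type goes into a form-encoded body. The URL and credentials are the ones used in the curl command; the class name `TokenRequest` is our own. The request is only built here, not sent, so the example runs without a server.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.nio.charset.StandardCharsets;
import java.util.Base64;

/** Hypothetical helper: builds an OAuth2 client_credentials token request. */
final class TokenRequest {

    static HttpRequest build(String tokenUrl, String clientId, String clientSecret) {
        // HTTP Basic credentials are base64("clientId:clientSecret").
        String basic = Base64.getEncoder().encodeToString(
                (clientId + ":" + clientSecret).getBytes(StandardCharsets.UTF_8));
        return HttpRequest.newBuilder(URI.create(tokenUrl))
                .header("Authorization", "Basic " + basic)
                .header("Content-Type", "application/x-www-form-urlencoded")
                .POST(HttpRequest.BodyPublishers.ofString("grant_type=client_credentials"))
                .build();
    }

    public static void main(String[] args) {
        HttpRequest request = build("http://localhost:9292/oauth2/token", "mcp-client", "secret");
        System.out.println(request.method() + " " + request.uri());
        // To actually send it:
        // HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.ofString());
        // The JSON response carries the token in its "access_token" field.
    }
}
```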

Building the MCP Client

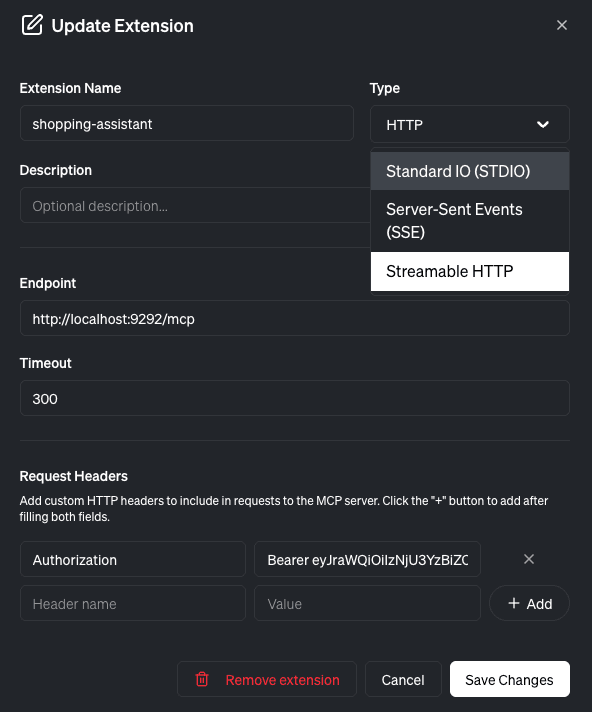

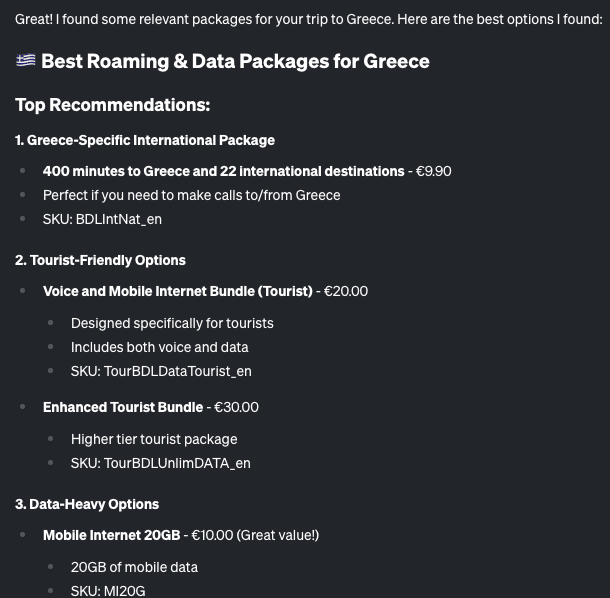

For the final step, we’ll use Goose with an Anthropic API key to run Sonnet, giving us an AI app that combines an Anthropic model with our MCP server to act as a shopping assistant.

We provide Goose with our generated token and the MCP endpoint so it can securely connect to the server. Once connected, we can query the available tools.
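For readers who would rather write their own client than use Goose, the sketch below shows roughly what such a client sends over the streamable HTTP transport: a POST to the MCP endpoint with the access token as a Bearer credential and an `Accept` header that allows both a plain JSON reply and an event stream. The first message of a session is `initialize`. The endpoint URL combines the port from the curl command with the `/mcp` path configured earlier; the class name, client name, and the protocol version string are assumptions for illustration. As before, the request is built but not sent.

```java
import java.net.URI;
import java.net.http.HttpRequest;

/** Hypothetical helper: builds authenticated MCP requests for the streamable HTTP transport. */
final class McpHttpRequests {

    /** Wraps a JSON-RPC body in a POST carrying the bearer token. */
    static HttpRequest post(String endpoint, String accessToken, String jsonRpcBody) {
        return HttpRequest.newBuilder(URI.create(endpoint))
                .header("Authorization", "Bearer " + accessToken)
                .header("Content-Type", "application/json")
                // The server may answer with plain JSON or with a server-sent event stream.
                .header("Accept", "application/json, text/event-stream")
                .POST(HttpRequest.BodyPublishers.ofString(jsonRpcBody))
                .build();
    }

    /** First message of a session; the version and client info below are assumed values. */
    static String initializeBody(int id) {
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id + ",\"method\":\"initialize\",\"params\":{"
                + "\"protocolVersion\":\"2025-03-26\",\"capabilities\":{},"
                + "\"clientInfo\":{\"name\":\"eshop-demo-client\",\"version\":\"0.0.1\"}}}";
    }

    public static void main(String[] args) {
        // "<access-token>" stands in for the token returned by the authorization server.
        HttpRequest request = post("http://localhost:9292/mcp", "<access-token>", initializeBody(1));
        System.out.println(request.method() + " " + request.uri());
        request.headers().map().forEach((name, values) -> System.out.println(name + ": " + values));
    }
}
```

If the server returns an `Mcp-Session-Id` header in its `initialize` response, the client is expected to echo it on later requests such as `tools/list`.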

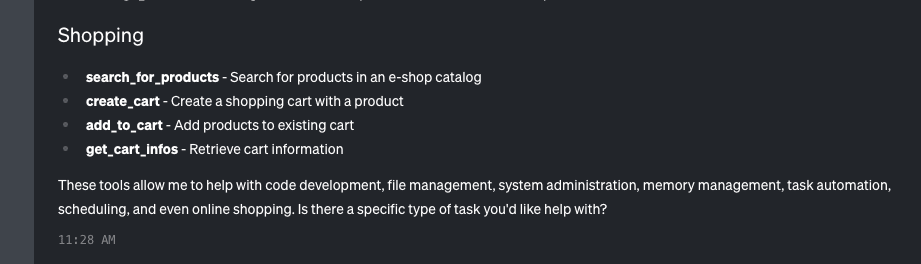

Next, we test the assistant with a simple dummy prompt. Note that careful prompt engineering plays a large part in how well such applications work.

The assistant demonstrates awareness of our internal data and responds accurately. It can invoke the tools we defined, like querying the product catalog.

Final thoughts

MCP servers offer a powerful, standardized way to connect LLMs to the tools, data, and services they need. This unified approach opens up many opportunities to create smarter, context-aware AI applications that enhance the value of a company’s data while keeping access under security and compliance controls. Just a few years ago, exposing APIs was enough. Is that still the case?

References

[1] https://www.anthropic.com/news/model-context-protocol

[2] https://modelcontextprotocol.io/docs/getting-started/intro

[3] https://modelcontextprotocol.io/docs/learn/client-concepts

[4] https://spring.io/blog/2025/04/02/mcp-server-oauth2

[5] https://docs.spring.io/spring-ai/reference/api/mcp/mcp-server-boot-starter-docs.html

[6] https://spring.io/projects/spring-authorization-server

[7] https://docs.spring.io/spring-security/reference/servlet/oauth2/resource-server/index.html